Your CI/CD Pipeline Is Part of Your Authorization Boundary. Act Like It

A practitioner's guide to making GitHub-to-AWS pipelines survive a FedRAMP Moderate assessment

Most FedRAMP assessments I’ve been involved with treat the CI/CD pipeline as an afterthought. It’s a diagram in the SSP that nobody questions, a data flow that gets hand-waved during interviews, and a set of controls that developers describe in terms the assessor accepts because they sound technical enough.

That’s a problem. Because your pipeline — the mechanism that puts code into your production environment — is one of the most security-critical components in your entire authorization boundary. And if you’re pulling code from a non-FedRAMP-authorized source like GitHub Commercial, you’ve got a supply chain risk sitting in the middle of your architecture that requires specific, documented mitigations.

I recently built out a comprehensive control mapping for a CI/CD architecture that a lot of CSPs are running: GitHub Commercial (outside the boundary) → Dev/Test environment (inside the boundary) → Production (inside the boundary, manual promotion gate). Here’s what you actually need to get right.

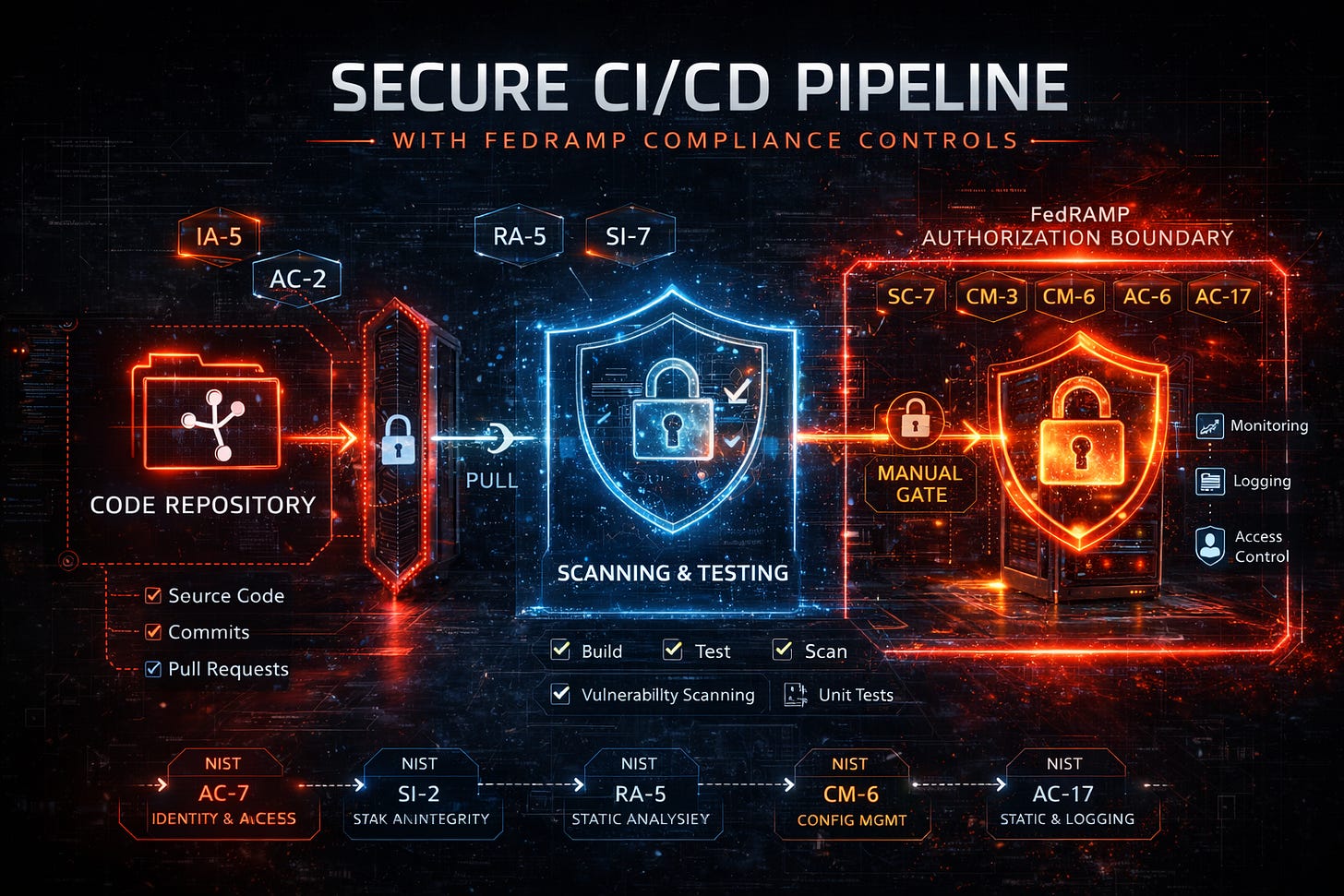

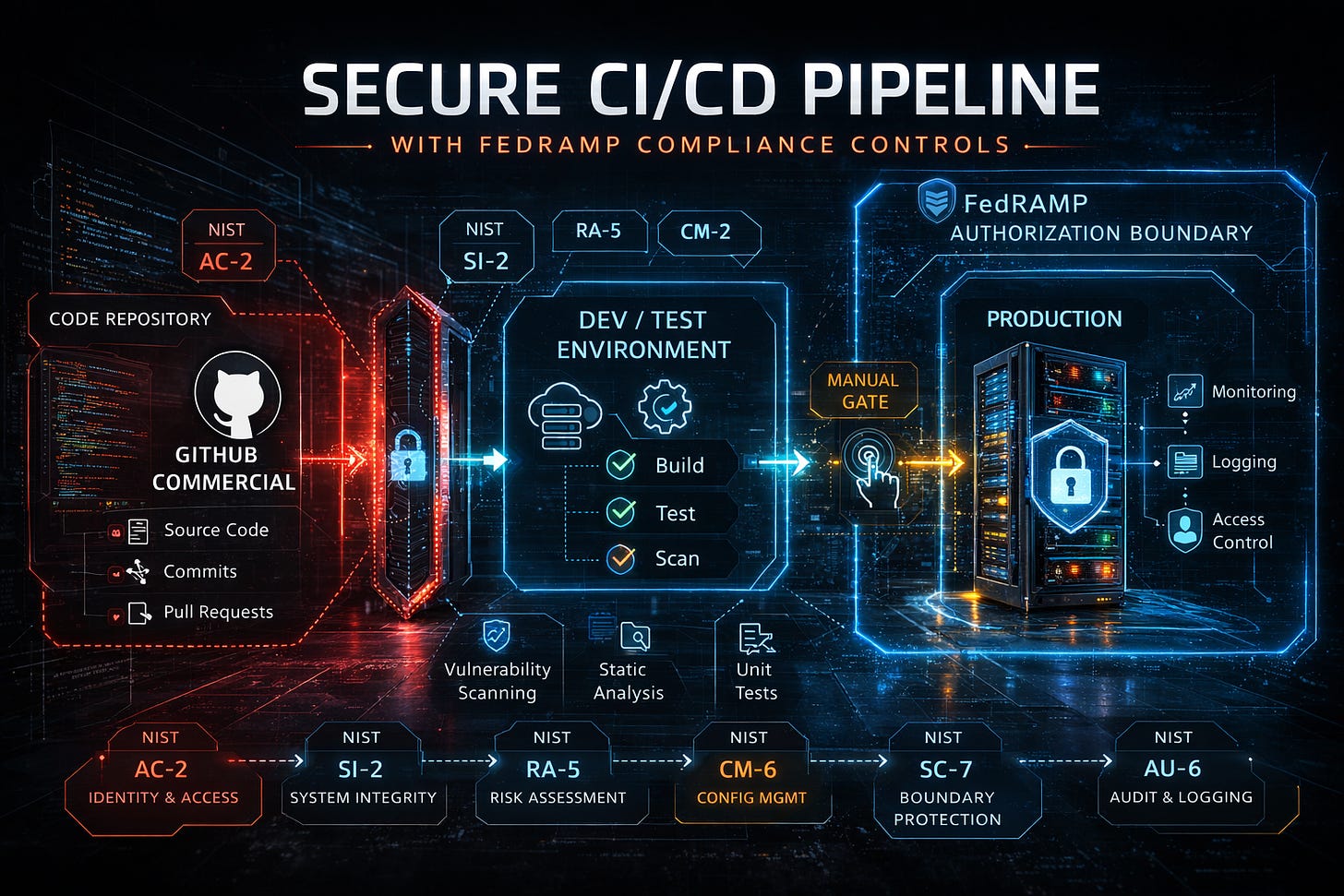

The Architecture

The pipeline has three distinct stages, and each has different compliance obligations:

Stage 1: GitHub Commercial — Your source code repository, sitting outside the authorization boundary on a platform that is not FedRAMP Authorized.

Stage 2: Dev/Test Environment — Inside the boundary. An automated pull brings code in for scanning, testing, and validation. No customer data here. Dev team has no access to production.

Stage 3: Production — Inside the boundary. A manual approval gate controls what gets promoted from dev. This is where federal data lives.

This architecture actually aligns well with the FedRAMP Authorization Boundary Diagram Job Aid, which explicitly shows a Corporate Cloud Dev Subnet with dev/test code analysis capabilities inside the boundary. But “aligns well” and “will survive an assessment” are two different things.

Stage 1: The GitHub Problem

GitHub Commercial is not FedRAMP Authorized. Full stop. That means you need to treat it as an external service with specific documentation requirements.

SA-9 (External System Services) is your anchor control. You must document GitHub as a non-FedRAMP-authorized external service in your SSP. Describe the security controls GitHub provides (encryption in transit, access controls, audit logging) and either formally accept the residual risk or open a POA&M item. Your SSP also needs to explain why this is acceptable: only source code is exchanged with GitHub, and no federal data is stored there.

SA-10 and SA-11 require you to document the full development lifecycle: branching strategy, code review requirements, who has commit and merge authority, how code integrity is ensured before it enters the boundary. If you can’t describe your pull request approval workflow to an assessor, you have a gap.

CM-5 and CM-5(5) are the ones people miss. You need formal management of every GitHub account with push access, and you need quarterly reviews of developer and integrator privileges. Not annual — quarterly. Record your review dates in the SSP. If an assessor asks when you last reviewed who has merge authority on your main branch and you can’t answer, that’s a finding.
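A quarterly review is easier to evidence if the comparison is scripted. The sketch below assumes you have exported the repository's collaborators, for example from GitHub's REST "list repository collaborators" endpoint, whose entries carry a `permissions` object with a `push` flag. It also assumes you keep an approved roster of logins. The function name, the roster, and the 90-day approximation of "quarterly" are illustrative choices, not FedRAMP-defined values. Merge authority enforced through branch protection would need a separate check.

```python
from datetime import date
from typing import Iterable, List, Mapping

# Illustrative: "quarterly" approximated as at most 90 days between reviews.
MAX_REVIEW_AGE_DAYS = 90


def review_push_access(
    collaborators: Iterable[Mapping],
    approved_logins: Iterable[str],
    last_review: date,
    today: date,
) -> List[str]:
    """Compare actual push access with an approved roster (hypothetical helper)."""
    with_push = {
        c["login"]
        for c in collaborators
        if c.get("permissions", {}).get("push", False)
    }
    # Anyone who can push but is not on the roster is a finding.
    findings = [
        f"unapproved push access: {login}"
        for login in sorted(with_push - set(approved_logins))
    ]
    # The review itself must also be current.
    if (today - last_review).days > MAX_REVIEW_AGE_DAYS:
        findings.append(
            f"privilege review overdue: last performed {last_review.isoformat()}"
        )
    return findings
```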

AC-22 applies even to private repositories. Ensure no sensitive configuration, credentials, or architectural details that could be exploited are committed. If your repo is public, you need a documented content review process.
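Credentials are best kept out of the repository by checking before a commit leaves a workstation and again when the boundary pulls. Dedicated secret scanners do this far more thoroughly. The sketch below only illustrates the idea with two well-known credential shapes: AWS access key IDs and PEM private-key headers. The pattern set and function name are illustrative assumptions.

```python
import re
from typing import List, Tuple

# Two well-known credential shapes only; real scanners ship far larger rule sets.
PATTERNS = {
    "AWS access key ID": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan_text(path: str, text: str) -> List[Tuple[str, int, str]]:
    """Return (path, line number, label) for each line matching a credential pattern."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((path, lineno, label))
    return hits
```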

Here’s the documentation checklist for Stage 1:

Your Authorization Boundary Diagram must show GitHub as an external service outside the boundary. The ABD Job Aid is explicit — any system or service used in any way to support the CSO must be illustrated in the ABD.

Your SSP Appendix M (Integrated Inventory Workbook) must list GitHub with its authorization status noted as non-FedRAMP-authorized.

You need a risk acceptance statement or POA&M entry documenting the residual risk. Don’t skip this. Assessors look for it.

Stage 2: The Security Gate

This is where untrusted code from outside the boundary gets validated. If Stage 1 is where the risk lives, Stage 2 is where you prove you’re managing it.

The most critical architectural principle: the boundary-side system must PULL code from GitHub, not the other way around. GitHub does not push into your boundary. This follows the same principle FedRAMP applies to update sources — threat feeds and vulnerability definitions are pulled, not pushed, and validated before installation. If your architecture allows GitHub webhooks to trigger deployments directly into your boundary, you need to rethink that flow.

SC-8 and SC-13 require FIPS 140-validated cryptographic modules for all data in transit across the boundary. If you’re on AWS GovCloud, you inherit this. If you’re on commercial AWS, you need explicit FIPS endpoint configuration. Document the specific cryptographic modules and their CMVP validation status. Assessors will ask.

SI-7 (Software & Information Integrity) is where your pipeline proves its value. Verify integrity of every code pull: validate commit signatures, webhook payload HMAC signatures, or signed tags. The pipeline should automate this on every build, with monthly integrity scans as the floor.
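For the HMAC option, GitHub signs each webhook delivery with the shared webhook secret. It sends the result in the `X-Hub-Signature-256` header as `sha256=` followed by the hex HMAC-SHA256 of the raw request body. Suppose a webhook is used only to tell the boundary-side job that a new commit exists, and the job still pulls the code itself. In that case, a verifier along these lines rejects forged notifications. The function name is hypothetical. This authenticates the notification, not the code, so commit or tag signature checks are still needed.

```python
import hashlib
import hmac
from typing import Optional


def verify_github_webhook(secret: bytes, raw_body: bytes, signature_header: Optional[str]) -> bool:
    """Check GitHub's X-Hub-Signature-256 header against the raw request body."""
    if not signature_header or not signature_header.startswith("sha256="):
        return False  # missing header or non-SHA-256 scheme: reject
    expected = "sha256=" + hmac.new(secret, raw_body, hashlib.sha256).hexdigest()
    # compare_digest runs in constant time, so timing does not reveal partial matches.
    return hmac.compare_digest(expected.encode("ascii"), signature_header.encode("utf-8"))
```

Verify against the exact bytes received, before any JSON parsing. Re-serialized JSON will not match the signature.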

RA-5 requires SAST, DAST, SCA (software composition analysis), and dependency scanning in the dev environment. Monthly OS, web application, and database scans are the FedRAMP minimum. Your pipeline should scan on every build — if you’re only doing monthly, you’re passing compliance but failing security.
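One way to make "scan on every build" enforceable is a gate that fails closed. The build is blocked if any required scanner did not complete, or if any finding meets a severity threshold. The scanner labels, severity scale, `Finding` shape, and "high" threshold below are assumptions for illustration. Real tools report in their own formats (often SARIF), which you would normalize first.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

# Illustrative severity scale and required scanner set.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}
REQUIRED_SCANNERS = frozenset({"sast", "dast", "sca", "dependency"})


@dataclass(frozen=True)
class Finding:
    scanner: str   # which tool reported it, e.g. "sast"
    rule_id: str   # tool-specific rule or CVE identifier
    severity: str  # one of SEVERITY_RANK's keys (case-insensitive)


def evaluate_build(
    completed_scanners: Iterable[str],
    findings: Iterable[Finding],
    block_at: str = "high",
) -> Tuple[bool, List[str], List[Finding]]:
    """Fail closed: block on missing scanners or on findings at/above block_at."""
    missing = sorted(REQUIRED_SCANNERS - set(completed_scanners))
    threshold = SEVERITY_RANK[block_at]
    blocking = [f for f in findings if SEVERITY_RANK[f.severity.lower()] >= threshold]
    return (not missing and not blocking), missing, blocking
```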

CM-3 is the control that connects your pipeline to your change management process. Every code change flowing through the pipeline is a change. You must perform a Security Impact Analysis before planned changes. Here’s the part most CSPs get wrong: if the analysis concludes the change adversely affects the system’s authorization integrity, you must treat it as a significant change requiring AO coordination and 3PAO involvement. That means your pipeline needs a mechanism to flag changes that could trigger the significant change process.
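The flagging mechanism does not have to decide whether a change is significant. It only has to route likely candidates to a human Security Impact Analysis. The sketch below assumes the pipeline knows which files a change touches and that you maintain your own list of boundary-sensitive paths. The areas and patterns shown are hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List

# Hypothetical mapping from boundary-sensitive areas to repository path patterns.
# fnmatch's "*" also matches "/", so "infra/iam/*" covers nested files too.
SENSITIVE_AREAS = {
    "network and boundary configuration": ["infra/network/*"],
    "IAM roles and policies": ["infra/iam/*"],
    "cryptographic configuration": ["*/crypto/*"],
    "authentication flows": ["src/auth/*"],
}


def areas_needing_sia(changed_files: Iterable[str]) -> List[str]:
    """Return sensitive areas a change touches; non-empty means route to a human SIA."""
    hits = set()
    for path in changed_files:
        for area, patterns in SENSITIVE_AREAS.items():
            if any(fnmatchcase(path, p) for p in patterns):
                hits.add(area)
    return sorted(hits)
```

An empty result means only that no listed path was touched. It does not replace the analysis itself.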

AU-2 requires continuous logging of all pipeline events: who triggered the pull, what commit was pulled, build results, scan results, pass/fail decisions. Pipe everything to your SIEM. If an assessor can’t trace a specific production deployment back through your pipeline to the original commit and the person who approved it, your audit trail has a gap.
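Traceability is much easier if every pipeline stage emits a structured event keyed by the same run identifier and commit SHA. The sketch below assumes a JSON-lines log and an event vocabulary (`code_pulled`, `scan_completed`, `promotion_approved`) invented for illustration. It shows how one run can be reconstructed, and how a missing approval or a mismatched commit surfaces as an audit gap.

```python
import json
from datetime import datetime, timezone
from typing import Dict, List


def audit_event(event: str, pipeline_run: str, commit_sha: str, actor: str, outcome: str, **details) -> str:
    """Serialize one pipeline event as a JSON line for the SIEM (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "pipeline_run": pipeline_run,
        "commit_sha": commit_sha,
        "actor": actor,
        "outcome": outcome,
        **details,
    }
    return json.dumps(record, sort_keys=True)


def trace_run(log_lines: List[str], pipeline_run: str) -> Dict:
    """Rebuild one run's chain of custody; raise if the trail has a gap."""
    chain = [e for e in map(json.loads, log_lines) if e["pipeline_run"] == pipeline_run]
    commits = {e["commit_sha"] for e in chain}
    approvers = [e["actor"] for e in chain if e["event"] == "promotion_approved"]
    if len(commits) != 1 or not approvers:
        raise ValueError(f"audit gap in run {pipeline_run}: commits={sorted(commits)}, approvers={approvers}")
    return {"commit_sha": commits.pop(), "approved_by": approvers, "events": [e["event"] for e in chain]}
```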

CM-8(3) requires automated detection of new assets with a maximum five-minute delay. If your pipeline spins up build containers or deploys new components, your inventory system must detect them within five minutes. This catches a lot of organizations off guard.
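Measuring the five-minute window requires two timestamps per asset. One is when the asset was launched, for example from orchestration or cloud audit logs. The other is when your inventory first recorded it. A sketch, assuming you can join those two sources on an asset ID; the data shape and function name are hypothetical:

```python
from datetime import datetime, timedelta
from typing import Iterable, List, Optional, Tuple

MAX_DETECTION_DELAY = timedelta(minutes=5)  # the five-minute window described above

# (asset_id, launched_at, first_seen_by_inventory or None if not yet seen)
Asset = Tuple[str, datetime, Optional[datetime]]


def late_detections(assets: Iterable[Asset], now: datetime) -> List[str]:
    """Return IDs of assets seen (or still unseen) more than five minutes after launch."""
    late = []
    for asset_id, launched_at, detected_at in assets:
        seen_at = detected_at if detected_at is not None else now
        if seen_at - launched_at > MAX_DETECTION_DELAY:
            late.append(asset_id)
    return late
```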

Network segmentation matters here too. The dev subnet must be logically separated from production. No direct network path to production data stores. The FedRAMP ABD template shows distinct subnets for a reason — your dev environment should not be able to reach production databases even if a developer tried.
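Segmentation claims can be checked mechanically. Assume you can export the CIDR-based ingress rules protecting production data stores; the rule format here is hypothetical. This sketch lists any rule whose source range overlaps the dev subnet, including catch-all ranges. Rules that reference other security groups rather than CIDRs would need separate handling.

```python
import ipaddress
from typing import Dict, List


def dev_paths_to_prod(dev_cidr: str, prod_ingress_rules: List[Dict]) -> List[Dict]:
    """Return production ingress rules whose source CIDR overlaps the dev subnet."""
    dev = ipaddress.ip_network(dev_cidr)
    return [
        rule for rule in prod_ingress_rules
        if ipaddress.ip_network(rule["source"]).overlaps(dev)
    ]
```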

Stage 3: The Manual Gate

The manual promotion gate between dev and production is actually a compliance strength. It demonstrates human oversight and separation of duties. But it only works if you implement it properly.

CM-3 applies again at the promotion gate. Document who has authority to approve production promotions, what they verify (scan results clean, testing passed, security impact analysis completed), and how approval is recorded. This should be auditable — an assessor should be able to pick any production deployment and see who approved it and what evidence they reviewed.
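Making the approval auditable mostly means recording it in a structured form and refusing to promote when evidence is missing. The sketch below assumes a hypothetical approver list and takes its three evidence items from the paragraph above. In practice, the record would come from your pipeline's approval step and be forwarded to the SIEM.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical values; yours come from the SSP's documented approver roles.
AUTHORIZED_APPROVERS = {"release-mgr-1", "release-mgr-2"}
REQUIRED_EVIDENCE = ("scans_clean", "tests_passed", "security_impact_analysis_done")


@dataclass
class PromotionRequest:
    pipeline_run: str
    commit_sha: str
    approver: str
    evidence: Dict[str, bool] = field(default_factory=dict)


def review_promotion(req: PromotionRequest) -> Tuple[bool, List[str]]:
    """Return (approved, reasons); any reason blocks promotion and should be logged."""
    reasons = []
    if req.approver not in AUTHORIZED_APPROVERS:
        reasons.append(f"{req.approver} is not an authorized production approver")
    reasons += [
        f"missing evidence: {item}"
        for item in REQUIRED_EVIDENCE
        if not req.evidence.get(item, False)
    ]
    return (not reasons), reasons
```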

AC-5 (Separation of Duties) is non-negotiable. The developer, the person approving the pull into dev, and the person approving production promotion should ideally be different individuals. At minimum, the developer and the production approver must be different people. Document this in your SSP. Enforce it technically — role-based access controls, not just policy.
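A technical check at the promotion step might compare identities across roles. This sketch assumes commit authors, the dev-pull approver, and the production approver have already been mapped to one identity namespace. GitHub logins and AWS principals usually differ, so that mapping is itself an assumption. The check blocks on the minimum rule and reports the "ideally different" cases as advisories.

```python
from typing import Iterable, List, Tuple


def check_separation_of_duties(
    commit_authors: Iterable[str],
    dev_pull_approver: str,
    prod_approver: str,
) -> Tuple[List[str], List[str]]:
    """Return (blocking, advisory) separation-of-duties problems for one promotion."""
    authors = {a.casefold() for a in commit_authors}
    dev = dev_pull_approver.casefold()
    prod = prod_approver.casefold()
    blocking, advisory = [], []
    if prod in authors:  # minimum rule: developer and production approver differ
        blocking.append("production approver is also a commit author")
    if dev in authors:  # "ideally different" cases are reported, not blocked
        advisory.append("dev-pull approver is also a commit author")
    if dev == prod:
        advisory.append("same person approved the dev pull and the production promotion")
    return blocking, advisory
```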

AC-6 (Least Privilege) means production deployment credentials should be tightly scoped. No developer should have production deployment permissions. The promotion mechanism itself should use a dedicated service account with the minimum permissions needed to deploy.
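A coarse lint over the promotion service account's IAM policy can catch obvious wildcards before an assessor does. This is a sketch only. It checks `Allow` statements for `*` or `service:*` actions and `*` resources. It ignores `NotAction`, `NotResource`, and conditions, and it is no substitute for AWS IAM Access Analyzer policy validation. The example policy and ARN in the test are made up.

```python
from typing import Dict, List


def _as_list(value) -> List:
    """IAM allows a single string or a list for Action/Resource, and a dict or list for Statement."""
    if isinstance(value, (str, dict)):
        return [value]
    return list(value)


def overly_broad_statements(policy: Dict) -> List[str]:
    """Flag Allow statements with wildcard actions or resources."""
    findings = []
    for i, stmt in enumerate(_as_list(policy.get("Statement", []))):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action", []))
        resources = _as_list(stmt.get("Resource", []))
        if any(a == "*" or a.endswith(":*") for a in actions):
            findings.append(f"statement {i}: wildcard action")
        if "*" in resources:
            findings.append(f"statement {i}: wildcard resource")
    return findings
```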

SI-2 requires security-relevant updates to be installed within 30 days of release. That means if a vulnerability is discovered and a patch is developed, your pipeline needs to get that patch through dev, tested, approved, and promoted to production within 30 days. Your manual promotion process needs a documented SLA for security patches.
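The 30-day window is simple arithmetic, but making it visible per patch helps the promotion SLA hold. A minimal sketch, assuming you track each security-relevant update's release date and the date it reached production (function name hypothetical):

```python
from datetime import date, timedelta
from typing import Optional

PATCH_WINDOW = timedelta(days=30)  # the 30-day window stated above


def patch_status(released_on: date, promoted_on: Optional[date] = None, today: Optional[date] = None) -> str:
    """Report whether a security update reached production within the window."""
    deadline = released_on + PATCH_WINDOW
    if promoted_on is not None:
        return "met" if promoted_on <= deadline else "missed"
    today = today or date.today()
    if today > deadline:
        return "overdue"
    return f"{(deadline - today).days} days remaining"
```

You may want an internal target shorter than 30 days so the manual gate has slack. That target is your own choice, not a FedRAMP value.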

Cross-Cutting Requirements

Some controls span all three stages and must be documented holistically:

Cryptographic requirements: All data in transit across and within the boundary must use FIPS 140-validated modules. All data at rest — artifacts, source code in S3, CodePipeline artifact stores — must be encrypted with FIPS-validated modules. The FedRAMP Policy for Cryptographic Module Selection allows update streams over validated-but-outdated modules when addressing known vulnerabilities, but CAVP-validated algorithms are strongly preferred.

Incident response: A compromise at any pipeline stage (GitHub account takeover, poisoned dependency, unauthorized code promotion) must be handled under your IR Plan. Report per the FedRAMP Incident Communications Procedure and US-CERT (now CISA) timelines.

Continuous monitoring deliverables tied to your pipeline:

Continuous: Auditable event monitoring, asset detection (5-min max delay)

Monthly: Vulnerability scanning (OS, web, DB), POA&M updates, integrity scans, least functionality review

60 Days: Authenticator/password refresh for pipeline service accounts

Quarterly: Developer privilege review

Annually: Baseline configuration review, CM Plan update, SSP update, penetration testing (3PAO), account recertification
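The periodic items above can be tracked with a simple due-date check. The intervals below approximate each cadence in days (monthly as 30, quarterly as 90, annually as 365). That is an assumption; if your agreement defines calendar-based deadlines, the logic would differ. The continuous items are omitted because they are monitored, not scheduled.

```python
from datetime import date
from typing import Dict, List

# Approximate intervals in days (assumption: monthly=30, quarterly=90, annually=365).
CADENCE_DAYS = {
    "vulnerability scanning (OS, web, DB)": 30,
    "POA&M update": 30,
    "integrity scan": 30,
    "least functionality review": 30,
    "pipeline service-account authenticator refresh": 60,
    "developer privilege review": 90,
    "baseline configuration review": 365,
    "CM Plan update": 365,
    "SSP update": 365,
    "penetration test (3PAO)": 365,
    "account recertification": 365,
}


def overdue_activities(last_completed: Dict[str, date], today: date) -> List[str]:
    """List activities never performed, or performed longer ago than their interval."""
    overdue = []
    for activity, days in CADENCE_DAYS.items():
        done = last_completed.get(activity)
        if done is None or (today - done).days > days:
            overdue.append(activity)
    return overdue
```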

The Elephant in the Pipeline: AI-Generated Code

Here’s what my original compliance mapping didn’t address — and what almost nobody in the FedRAMP community is talking about yet: what happens when the code flowing through your pipeline wasn’t written by a human?

GitHub Copilot, Amazon Q, Cursor, Claude Code, and a growing fleet of agentic AI coding tools are now embedded in developer workflows across the defense industrial base. GitHub’s own Copilot coding agent can now autonomously pick up issues, write code, run security scans, self-review, and open pull requests — all without a human touching the keyboard. That’s not a future problem. That’s happening in repositories that feed FedRAMP-authorized production environments right now.

The compliance implications are significant, and none of the current FedRAMP control baselines were designed with this in mind.

The integrity problem. SI-7 requires you to verify integrity of code entering the boundary. But what does “integrity” mean when an AI generated the code? Commit signature verification tells you which account committed the code, not whether a human reviewed what the AI produced. Research from the Chinese University of Hong Kong demonstrated that Copilot can be induced to leak secrets from its training data through targeted prompts. A separate study found that roughly 30% of Copilot-generated code snippets contained security weaknesses spanning 43 different CWEs — including eight from the CWE Top-25.

Your SAST and SCA scanners in Stage 2 are your safety net, but they were calibrated for human-written code patterns. AI-generated code introduces vulnerabilities at a different velocity and distribution. If your scanning tools aren’t tuned for AI-specific weakness patterns, you’re catching less than you think.

The supply chain risk. Stage 1 covers SA-9 for GitHub as an external service. But Copilot adds another external service layer, one that pulls context from your entire codebase and sends it to Microsoft/OpenAI’s infrastructure for inference. On the free tier, user interactions may be used for model training. If a developer pastes proprietary architecture details, API keys, or configuration logic into a Copilot prompt, that data has left your control. GitGuardian reports that repositories using Copilot leak secrets at a 40% higher rate than repositories that don’t.

For FedRAMP purposes, you need to document AI coding assistants as an additional external service under SA-9, separate from GitHub itself. Describe what data flows to the AI service, what controls the AI vendor provides, and what residual risk you’re accepting. If developers are using Copilot Business or Enterprise, document the data handling differences. If anyone is using the free tier on code that touches your authorization boundary — that’s a finding.
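If you keep the external-service inventory as structured data, the SA-9 documentation gaps described above can be checked automatically. The record fields and function below are hypothetical. They mirror this paragraph: authorization status, what data flows to the service, plan tier, and how residual risk is dispositioned.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ExternalService:
    name: str
    fedramp_authorized: bool
    data_sent: Tuple[str, ...]   # e.g. ("code context", "prompts")
    plan_tier: str               # e.g. "free", "business", "enterprise"
    risk_disposition: str = ""   # "accepted", "poam", or "" if undocumented


def sa9_gaps(services: List[ExternalService]) -> List[str]:
    """Flag inventory entries missing the documentation SA-9 expects."""
    gaps = []
    for s in services:
        if not s.data_sent:
            gaps.append(f"{s.name}: data flows not documented")
        if not s.fedramp_authorized and s.risk_disposition not in ("accepted", "poam"):
            gaps.append(f"{s.name}: no risk acceptance or POA&M entry")
        if s.plan_tier.lower() == "free":
            gaps.append(f"{s.name}: free tier used on code that enters the boundary")
    return gaps
```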

The separation of duties gap. AC-5 requires separation between the developer and the production approver. But what happens when Copilot’s coding agent autonomously generates code, opens a PR, and self-reviews it? The “developer” is now an AI, and the first human to see the code may be the person approving the pull into your dev environment. Your separation of duties model needs to explicitly address AI-authored commits. At minimum, AI-generated code should be flagged in your pipeline and require human review before entering the authorization boundary — not just before production promotion.
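Flagging AI-authored commits depends on signals you can actually observe, and none of them is guaranteed. Possible signals include bot accounts (GitHub App logins end in `[bot]`), commit trailers your team agrees to add, and co-author lines that some tools insert. The sketch below assumes such conventions. The `AI-Assisted:` trailer and the tool-name list are hypothetical and should be replaced with whatever your tools and policy really produce. An empty result means "no marker found", not "human-written".

```python
import re
from typing import List

# Hypothetical markers; adapt to what your tools and commit conventions actually emit.
BOT_AUTHOR = re.compile(r"\[bot\]$")
AI_TRAILER = re.compile(r"^AI-Assisted:\s*(?:yes|true)\s*$", re.IGNORECASE | re.MULTILINE)
AI_COAUTHOR = re.compile(r"^Co-authored-by:.*\b(?:copilot|claude|amazon q)\b", re.IGNORECASE | re.MULTILINE)


def ai_review_reasons(author_login: str, message: str) -> List[str]:
    """Return why a commit should get mandatory human review before entering the boundary."""
    reasons = []
    if BOT_AUTHOR.search(author_login):
        reasons.append("bot author")
    if AI_TRAILER.search(message):
        reasons.append("AI-Assisted trailer")
    if AI_COAUTHOR.search(message):
        reasons.append("AI co-author")
    return reasons
```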

The prompt injection vector. Just two weeks ago, Orca Security disclosed a vulnerability where attackers could inject malicious instructions into a GitHub Issue that would be automatically processed by Copilot when launching a Codespace, potentially leaking the user’s GitHub token and enabling repository takeover. This is a supply chain attack that weaponizes the AI coding tool itself. Your pipeline’s integrity verification at the boundary crossing (SI-7) needs to account for the possibility that the AI assistant was compromised or manipulated before the code was even committed.

What your SSP needs to address:

Document every AI coding tool used by developers who contribute code to the CSO, and treat each as an external information system service under SA-9 with specific data flow documentation.

Update your SA-11 (Developer Testing) description to explain how AI-generated code is identified, flagged, and subjected to additional security review.

Ensure your RA-5 scanning covers AI-specific vulnerability patterns.

Add AI-generated code review requirements to your CM-3 change control process.

Brief your developers, and make sure the person signing the executive affirmation knows that AI is writing code that enters the authorization boundary.

This isn’t a theoretical future concern. It’s a current gap in almost every FedRAMP SSP I’ve reviewed. The CSPs that get ahead of it now will have a significant advantage when assessors start asking these questions — and they will.

The Bottom Line

Your CI/CD pipeline isn’t just a DevOps convenience. In a FedRAMP Moderate environment, it’s a security-critical component that touches boundary protection, supply chain integrity, change management, access control, and continuous monitoring. Every stage has specific control obligations, and the connections between stages are where most compliance gaps hide.

If you’re a CSP running this architecture and your SSP describes the pipeline in two paragraphs, you’re not ready for assessment. If you’re an assessor and you’re not walking the pipeline end-to-end during interviews, you’re not doing a thorough job.

The pipeline is the boundary. Treat it like one.